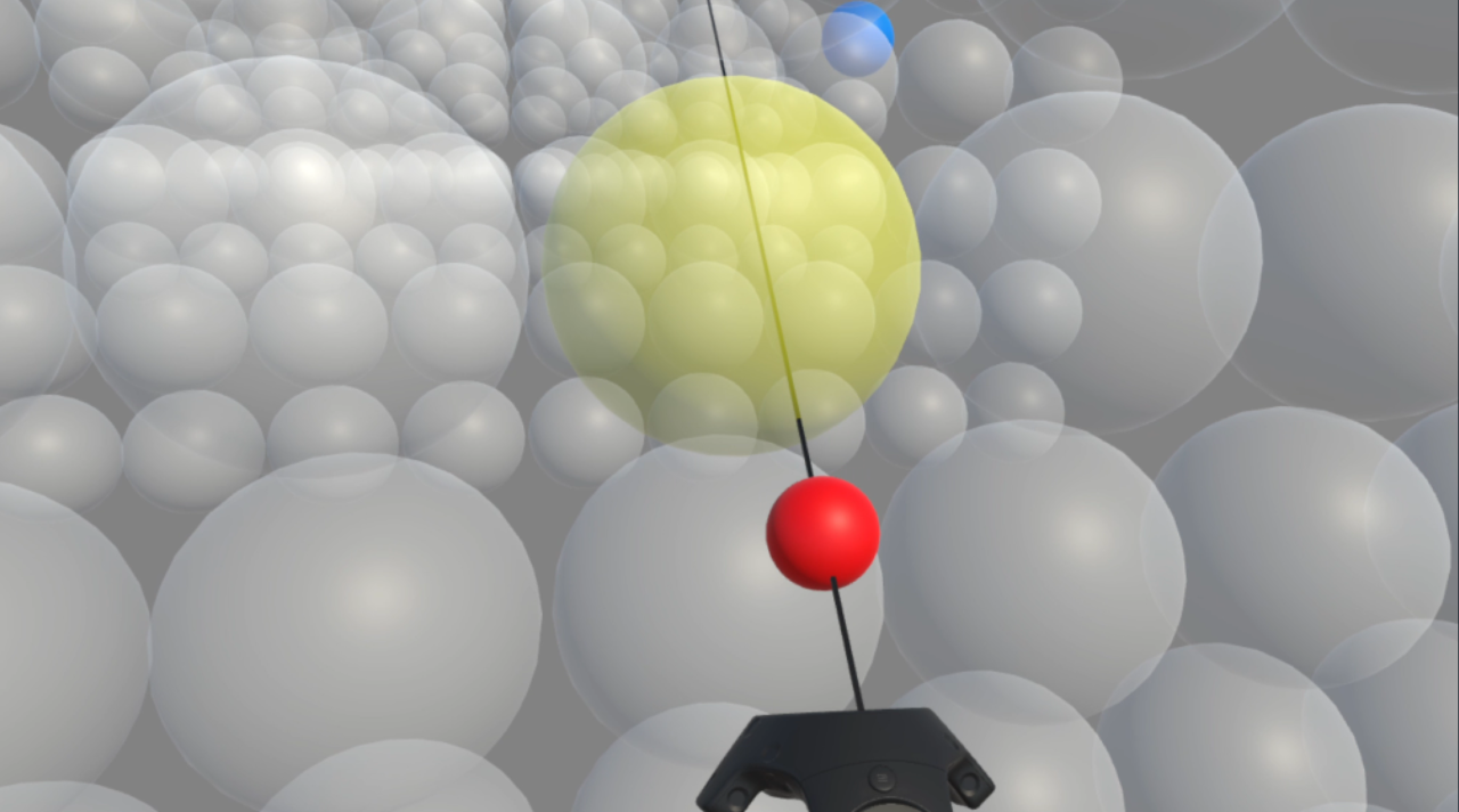

GazeRayCursor: Facilitating Virtual Reality Target Selection by Blending Gaze and Controller Raycasting

Di Laura Chen, Marcello Giordano, Hrvoje Benko, Tovi Grossman, and Stephanie Santosa. In 29th ACM Symposium on Virtual Reality Software and Technology (VRST), October 2023.